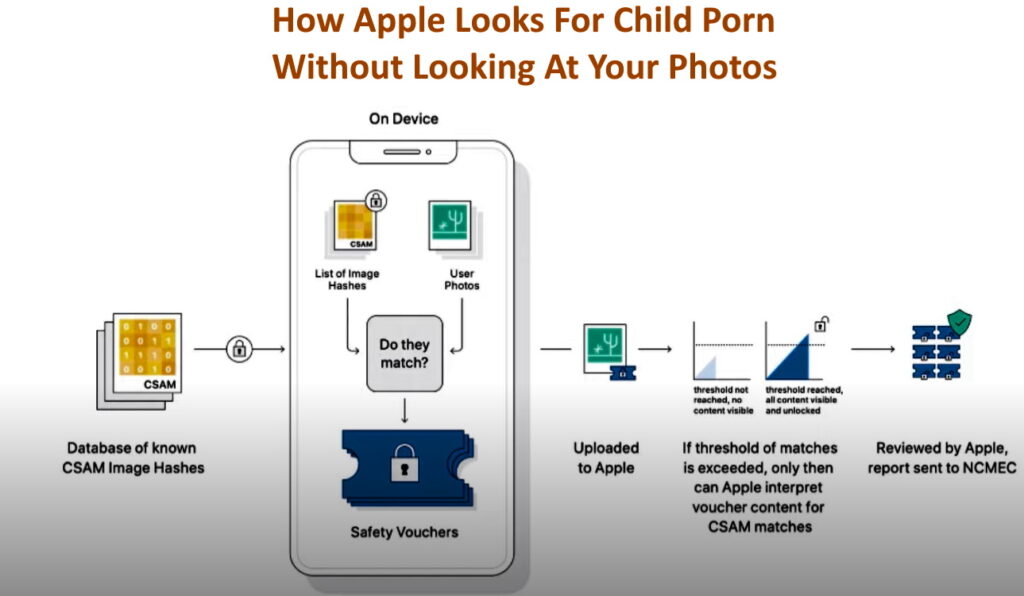

In the first half of 2021, Apple announced that it would begin checking photos on Apple devices for child pornography, and many privacy advocates became concerned that Apple was overreaching. On August 5th, Apple explained the details in highly technical terms that most people can’t follow, so we simplified the process into four steps everyone can understand:

- Apple devices now mathematically generate a unique number (like a serial number) for every photo

- Apple devices now contain the list of mathematically generated unique numbers (think serial numbers) for known child pornographic images

- Apple devices now compare the unique numbers for known child pornography to the unique numbers for your photos, and if there is a match, you very likely have child pornography on your device

- If you have too many of these matches, and Apple is not saying how many that is, Apple will:

  - decrypt the photos that were flagged

  - have a human Apple staffer look at the pictures to confirm they are child porn

  - report confirmed child pornography to an agency that will re-confirm before notifying the police
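
For readers who think in code, the four steps above can be sketched roughly as follows. This is only an illustration of the idea, not Apple's implementation: the function names, the sample numbers, and the `MATCH_THRESHOLD` value are all hypothetical (Apple has not said what the real threshold is), and the real system uses cryptography so that neither side sees the other's list in the clear.

```python
from __future__ import annotations

# Hypothetical value for illustration only; Apple has not published the real one.
MATCH_THRESHOLD = 3


def find_matches(photo_numbers: dict[str, int], known_numbers: set[int]) -> list[str]:
    """Return the names of photos whose number appears in the known list.

    photo_numbers maps a photo name to its generated number (steps 1 and 3).
    known_numbers is the list of numbers for known images (step 2).
    """
    return sorted(
        name for name, number in photo_numbers.items() if number in known_numbers
    )


def needs_human_review(matches: list[str], threshold: int = MATCH_THRESHOLD) -> bool:
    """Step 4: only escalate once the number of matches reaches the threshold."""
    return len(matches) >= threshold


if __name__ == "__main__":
    # Made-up numbers standing in for the "serial numbers" of four photos.
    photos = {"a.jpg": 111, "b.jpg": 222, "c.jpg": 333, "d.jpg": 444}
    known = {222, 333, 444, 999}

    matches = find_matches(photos, known)
    print("Matched photos:", matches)
    print("Send to human review:", needs_human_review(matches))
```

The point of the threshold is that a single match does nothing on its own; only an account that accumulates enough matches is passed on for human confirmation.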


The formula that is used to create the serial numbers is smart enough to adjust for minor changes to an image, like cropping the edge off to make the photo smaller or changing its resolution.
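
To give a feel for how a number can survive a change in resolution, here is a minimal sketch of a much simpler technique called an "average hash". It is not Apple's formula, and it only demonstrates the resolution case, not cropping. The helper names (`shrink`, `average_hash`, `upscale`, `hamming_distance`) and the toy gradient image are our own assumptions for illustration; images are plain lists of grayscale pixel values so no extra libraries are needed.

```python
def shrink(pixels, size=8):
    """Downscale a square grayscale image to size x size by averaging blocks.

    Assumes the image side length is a multiple of `size`.
    """
    block = len(pixels) // size
    small = []
    for by in range(size):
        row = []
        for bx in range(size):
            total = 0
            for y in range(by * block, (by + 1) * block):
                for x in range(bx * block, (bx + 1) * block):
                    total += pixels[y][x]
            row.append(total / (block * block))
        small.append(row)
    return small


def average_hash(pixels, size=8):
    """Build a 64-bit number: one bit per cell, set when the cell is brighter than average."""
    cells = [value for row in shrink(pixels, size) for value in row]
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a, b):
    """Count how many bits differ between two hashes (0 means identical)."""
    return bin(a ^ b).count("1")


def upscale(pixels, factor=2):
    """Enlarge an image by repeating every pixel `factor` times in each direction."""
    return [
        [value for value in row for _ in range(factor)]
        for row in pixels
        for _ in range(factor)
    ]


if __name__ == "__main__":
    # A toy 16x16 gradient "photo", the same photo at double resolution,
    # and a different (inverted) photo for comparison.
    original = [[x * 10 + y for x in range(16)] for y in range(16)]
    resized = upscale(original, 2)
    different = [[255 - value for value in row] for row in original]

    print("original vs resized:", hamming_distance(average_hash(original), average_hash(resized)))
    print("original vs different:", hamming_distance(average_hash(original), average_hash(different)))
```

Because the hash is built from the overall pattern of light and dark areas rather than from the exact pixels, the resized copy gets the same number as the original while a genuinely different picture does not.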

What Is Apple Doing That Others Aren’t?

Apple is moving the check for child pornography from its cloud services to your local device. This will catch many more child predators and will reduce the work Apple’s cloud servers have to do.

Are Tech Companies Checking My Photos for Porn?

In a word, no. Virtually all of the tech companies you can think of (Google, Apple, Microsoft…) do the same mathematical comparison, looking for known CHILD porn images, when photos are uploaded to their cloud services (think iCloud, OneDrive, Google Drive…).

Where Does The List Of Known Child Pornographic Images Come From?

The list of known child porn images is managed by the good people at the National Center For Missing & Exploited Children (aka NCMEC).

What Does URTech.ca Think About Apple’s Scanning?

While this technology could easily be extended to peer inside your phone or tablet and see what is happening, URTech.ca thinks that today, with this limited use, it is a good idea.

We think that Apple can be trusted to use this technology the way they say they will today. That being said, this is the top of a VERY slippery slope, so in the years to come governments and consumers need to keep a careful eye on Apple’s use of it.

Note that we are big supporters of the CSAM Scanning Tool on Cloudflare.

Video Explanation of How Apple Checks for Child Porn

There are many good articles written on the subject, and we liked the Daily Tech News Show’s version the best. It is clear, easy to understand, and discusses the problems with this technology in a serious but rational way.

The best line in this video is:

“… it’s never the intended use that is the problem; it is the unintended use…”
